Learnings for the week 9/29/2019

Interesting bits from the previous week

Fortnite Highlights

I love ESPN highlight reels. What if a computer could automatically watch a game and create highlight reels all by itself?

That is the problem my friend Hemanth Sagar and I attempted to tackle a couple of years ago. Hemanth built a system to “watch” a tennis match and turn 6 hours of gameplay into the 6 most exciting minutes. I’ll have a post on that soon.

That got us thinking — what if we could do the same for eSports…

First, why would that be interesting? Well, the top Twitch streamers need to create content (play video games), show off their skills and build a community. What better way to build community than a highlight reel of you playing against the various people you compete with on Fortnite?

Think about it this way: how much would I love to have a clip of me playing basketball against LeBron James? I’d kill for one. I’d show it to everyone, even though everyone would see LeBron dunk on me literally and figuratively.

Today’s kids play eSports and, unlike me vs. LeBron, some of them can actually beat their heroes and have the video evidence to prove it.

Here again Hemanth led the charge and together we built a system for watching Fortnite from streamers like @Ninja and extracting highlight clips that get automatically tweeted out at the streamer and the competitor.

YouTube version of the clip:

So what are we seeing:

Computer watches a Twitch stream (e.g. 8 hours of Fortnite gameplay)

Computer finds the key moments (the kills)

Computer extracts the text on the screen (at maybe 80% accuracy) to figure out who did what with what

Computer automatically creates the GIF and tweets it

What is the input?

A single 8-hour Fortnite stream. That is what is impressive: there is no secondary data stream marking the key moments and no text feed of the words that appear on screen. All of this has to be extracted from the video.
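To make the "find the key moments, then cut the clip" steps a bit more concrete, here is a minimal sketch of one small piece: turning a list of detected kill timestamps into clip windows. This is an illustration, not the code from our system. The names `Clip` and `build_clips`, and the padding values, are assumptions made up for the example, and the detection step itself (finding the kills in the video) is taken as already done.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    start: float  # seconds from the start of the stream
    end: float


def build_clips(event_times, before=8.0, after=4.0, stream_length=None):
    """Turn kill timestamps (seconds) into padded, non-overlapping clips.

    `before` and `after` are hypothetical padding values: how much
    lead-up and aftermath to keep around each detected kill.
    """
    clips = []
    for t in sorted(event_times):
        start = max(0.0, float(t) - before)
        end = float(t) + after
        if stream_length is not None:
            end = min(end, float(stream_length))
        if clips and start <= clips[-1].end:
            # Kills close together belong in one highlight (a multi-kill).
            clips[-1].end = max(clips[-1].end, end)
        else:
            clips.append(Clip(start, end))
    return clips


if __name__ == "__main__":
    # Two kills five seconds apart, then one much later in the stream.
    for clip in build_clips([100.0, 105.0, 300.0]):
        print(f"{clip.start:.0f}s -> {clip.end:.0f}s")
```

Merging overlapping windows means a quick double kill becomes one longer clip instead of two nearly identical GIFs.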

A sincere thank you to Hemanth for the years of partnering on fun projects. Hemanth is an incredible engineer and we have a few more up our sleeves.

I found Sapiens to be a tough read. I never got into the groove with this book, but it was informative and had lots that I had never thought about.

The key points of the book are very well summarized in bullet points here by Maksim Stepanenko: http://maksim.ms/blog/book/2016/sapiens/ I kinda wish I had just read this and called it a day.

What a fun, fast read on the history of the Skunk Works: the building of the U-2, the stealth planes, the SR-71 and, overall, an incredibly well-run organization delivering on time and on budget.

The writing and storytelling are excellent. One favorite bit from the book: the key math/physics research behind stealth technology, which led to the odd, flat shapes of stealth planes and boats, comes from a Russian mathematician who published his paper during the Cold War. It was a paper that few read and that seemed benign, so Russia allowed it to be published more broadly. It was that paper, read almost 10 years later, that led the Skunk Works to their major breakthrough on developing stealth planes. Said differently: in the middle of the Cold War the Russians figured out the key to stealth technology, didn’t know it, and shared it with the world.

Manipulating Photos to Improve (Computer Vision) Readability

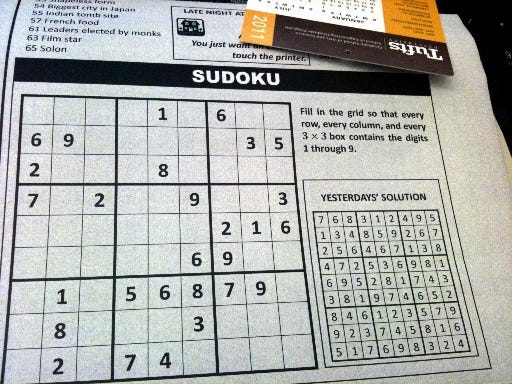

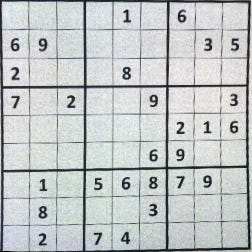

Say you take a photo of the newspaper like this:

And you want to clean it up — how in code can you manipulate the image to get:

Apurva Mehta has a simple blog post showing how to use OpenCV to do just this: https://amehta.github.io/posts/2019/09/select-sections-from-images-of-newspaper-clippings-receipts-etc-using-opencv-and-python/
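I have not reproduced the code from Apurva's post here. As a rough sketch of one common way to do this kind of cleanup, here is a four-point perspective warp. It assumes you already know the four corners of the clipping in the photo (picked by hand, say). The function names `order_corners`, `target_size` and `flatten_clipping` are my own for illustration and are not taken from the post. The corner-ordering trick also assumes the clipping is not rotated too far from upright.

```python
import math


def order_corners(points):
    """Return corners as [top-left, top-right, bottom-right, bottom-left].

    Uses the usual heuristic: top-left has the smallest x + y,
    bottom-right the largest; top-right has the smallest y - x,
    bottom-left the largest.
    """
    top_left = min(points, key=lambda p: p[0] + p[1])
    bottom_right = max(points, key=lambda p: p[0] + p[1])
    top_right = min(points, key=lambda p: p[1] - p[0])
    bottom_left = max(points, key=lambda p: p[1] - p[0])
    return [top_left, top_right, bottom_right, bottom_left]


def target_size(ordered):
    """Pick an output (width, height) from the longest opposite edges."""
    tl, tr, br, bl = ordered
    width = max(math.dist(tl, tr), math.dist(bl, br))
    height = max(math.dist(tl, bl), math.dist(tr, br))
    return round(width), round(height)


def flatten_clipping(image, corners):
    """Warp the quadrilateral `corners` in `image` to an upright rectangle."""
    # OpenCV and NumPy are only needed for the warp itself.
    import cv2
    import numpy as np

    ordered = order_corners(corners)
    width, height = target_size(ordered)
    src = np.array(ordered, dtype="float32")
    dst = np.array(
        [[0, 0], [width - 1, 0], [width - 1, height - 1], [0, height - 1]],
        dtype="float32",
    )
    matrix = cv2.getPerspectiveTransform(src, dst)
    return cv2.warpPerspective(image, matrix, (width, height))
```

To try it, load a photo with `cv2.imread`, pass it to `flatten_clipping` along with the four corner coordinates, and save the result with `cv2.imwrite`.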